Decoding Motor Intent from Neural Signals

Comparing MLP, 2D CNN, LSTM, and Transformer architectures for brain-computer interface decoding

This project decodes hand position and velocity from 95-channel neural spike data recorded during a center-out reaching task.

The goal: predict where a monkey is moving its hand using only brain signals. Four deep learning architectures were trained and compared on the same dataset (contdata95.mat), sampled in 50 ms bins.

Live Decoding Replay

Watch each model decode hand position from neural activity in real time. Blue = actual trajectory, red = model prediction.

Architecture Comparison

Pearson correlation between predicted and actual kinematics on the held-out test set.
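As a rough illustration of the metric, the sketch below computes the Pearson correlation for each kinematic output and then averages the results. It assumes the "average correlation" quoted on this page is the mean across outputs such as position and velocity, which matches the 2D CNN figures (0.97 and 0.61 averaging 0.79). The function names and the dictionary layout are hypothetical and are not taken from the project notebooks.

```python
import math


def pearson(x, y):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Covariance and variances, left unnormalised because the 1/n factors cancel.
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


def average_correlation(actual, predicted):
    """Per-output correlations and their mean.

    `actual` and `predicted` map an output name (hypothetical, e.g.
    "position" or "velocity") to a list of values over the test set.
    """
    per_output = {name: pearson(actual[name], predicted[name]) for name in actual}
    mean_r = sum(per_output.values()) / len(per_output)
    return per_output, mean_r
```

With NumPy available, `numpy.corrcoef(x, y)[0, 1]` gives the same per-output value; the explicit version is shown only to make the formula visible.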

Model Architectures

Fully Connected NN

2D CNN

LSTM

Transformer

Key Insight

Regularization matters more than architecture complexity. The original MLP used a dropout rate of 0.5, which hurt learning; reducing it to 0.3 brought the single-bin MLP to 0.88 average correlation. The LSTM dominates at 0.987 average correlation, benefiting from recurrent memory over 32 time steps. The 2D CNN (0.79) captures spatio-temporal patterns but struggles with position decoding. The Transformer (0.74) underperforms here, likely because of limited hyperparameter tuning and the small dataset.
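The difference between single-bin input and a 32-step history can be sketched as a sliding-window transform over the binned data. This is a minimal illustration, not the project's preprocessing: it assumes each time step is one 50 ms bin (so 32 steps would span about 1.6 s) and that each window is paired with the kinematics at its last bin. The function name `make_windows` and its arguments are hypothetical.

```python
def make_windows(features, targets, window=32):
    """Build (history, target) pairs for a sequence model.

    features: list of per-bin feature vectors (e.g. 95 spike counts per bin)
    targets:  list of per-bin kinematic values, same length as `features`
    window:   number of consecutive bins in each input history
    """
    if len(features) != len(targets):
        raise ValueError("features and targets must have the same length")
    if window < 1:
        raise ValueError("window must be at least 1")
    inputs, outputs = [], []
    # Each window ends at bin `end - 1` and is paired with that bin's target,
    # so the model only ever sees past and present neural activity.
    for end in range(window, len(features) + 1):
        inputs.append(features[end - window:end])
        outputs.append(targets[end - 1])
    return inputs, outputs
```

Setting `window=1` reduces this to the single-bin case, where each input holds one bin of activity. Larger windows yield `len(features) - window + 1` examples, each with `window` bins of history.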

Original Results

Decoded trajectories from the notebooks. Blue = actual, red = predicted.

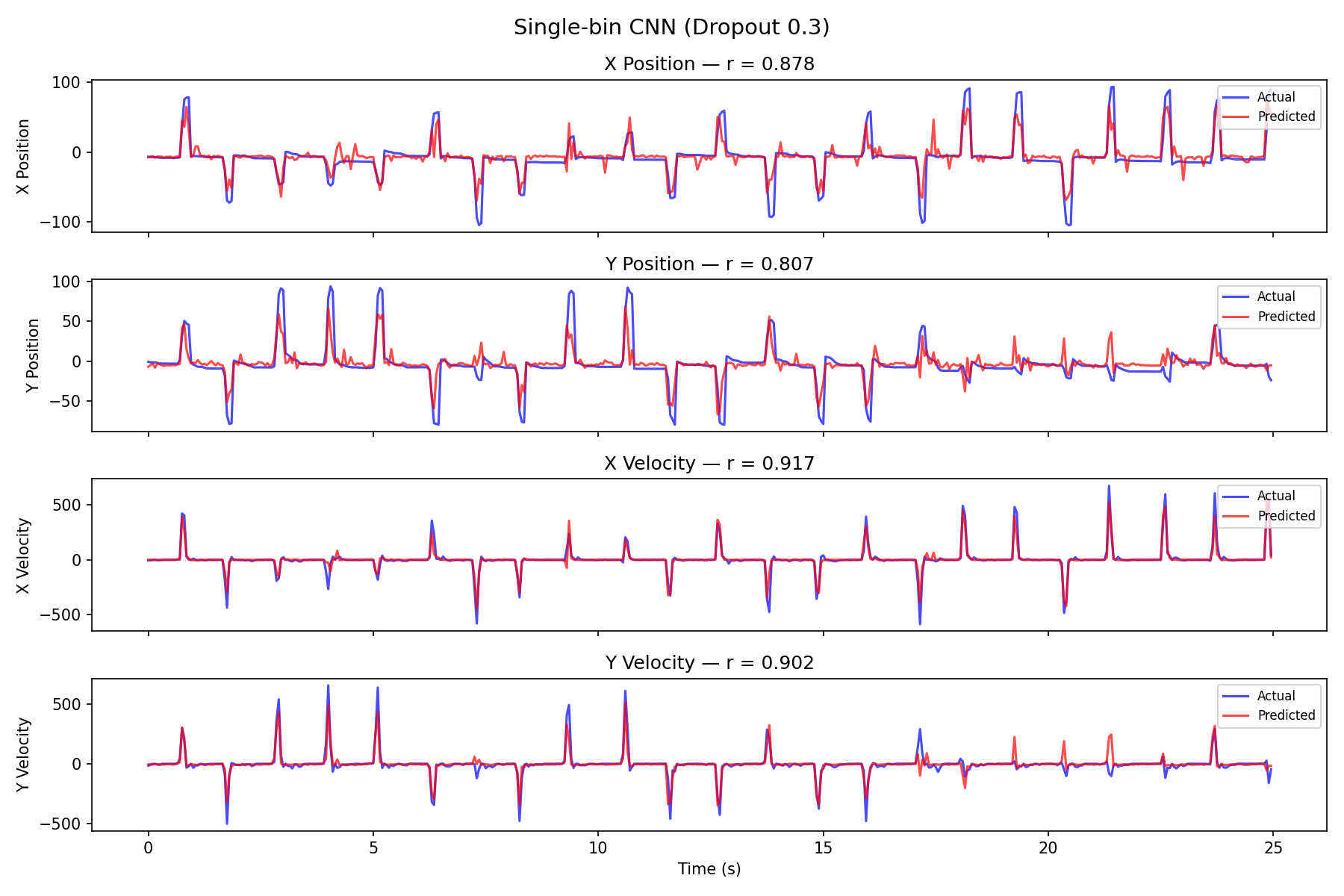

MLP Decode

With proper regularization (dropout 0.3), the MLP achieves 0.876 average correlation from single time-bin inputs.

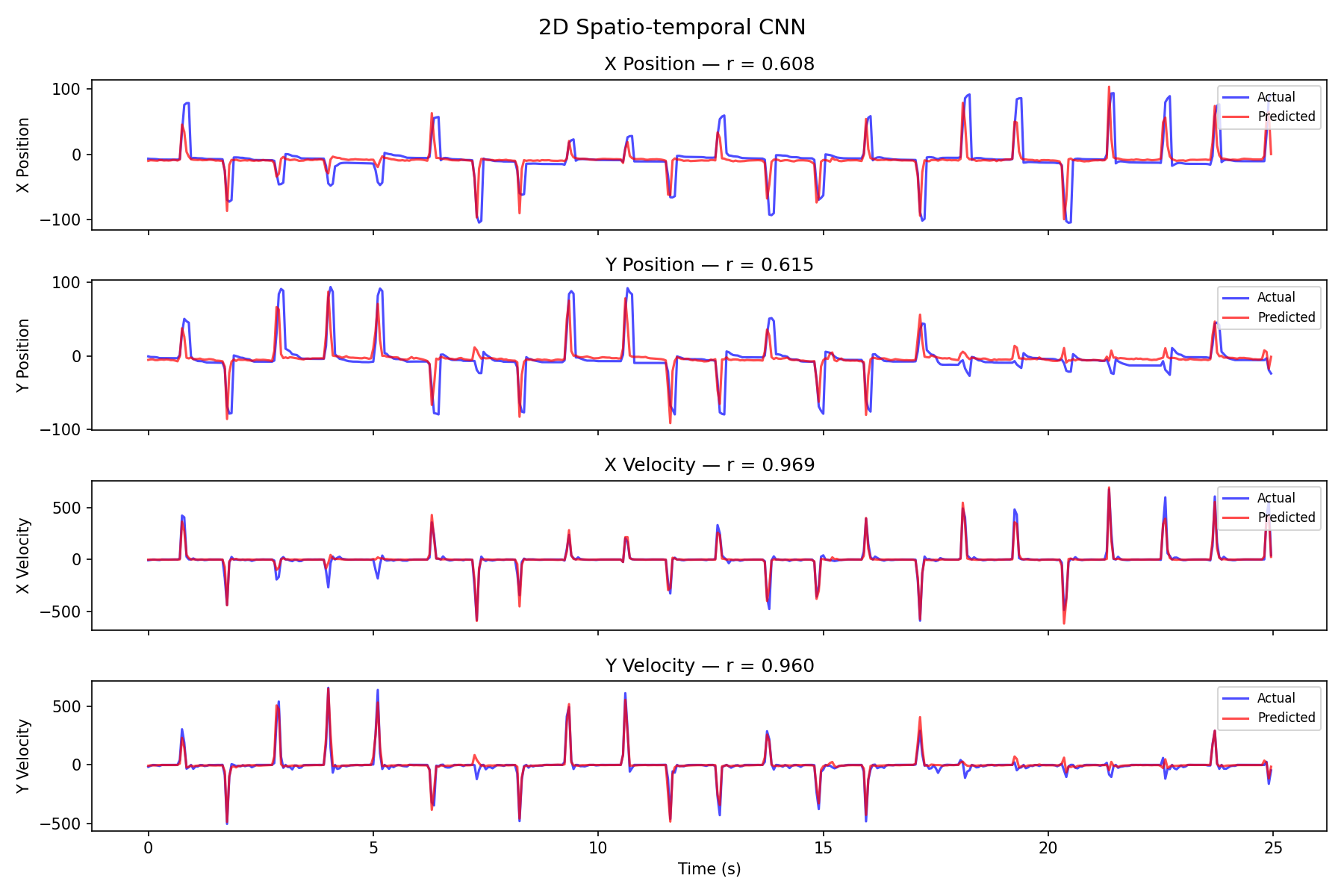

2D CNN Decode

The 2D CNN excels at velocity (0.97) but is weaker on position (0.61), averaging 0.79 correlation.

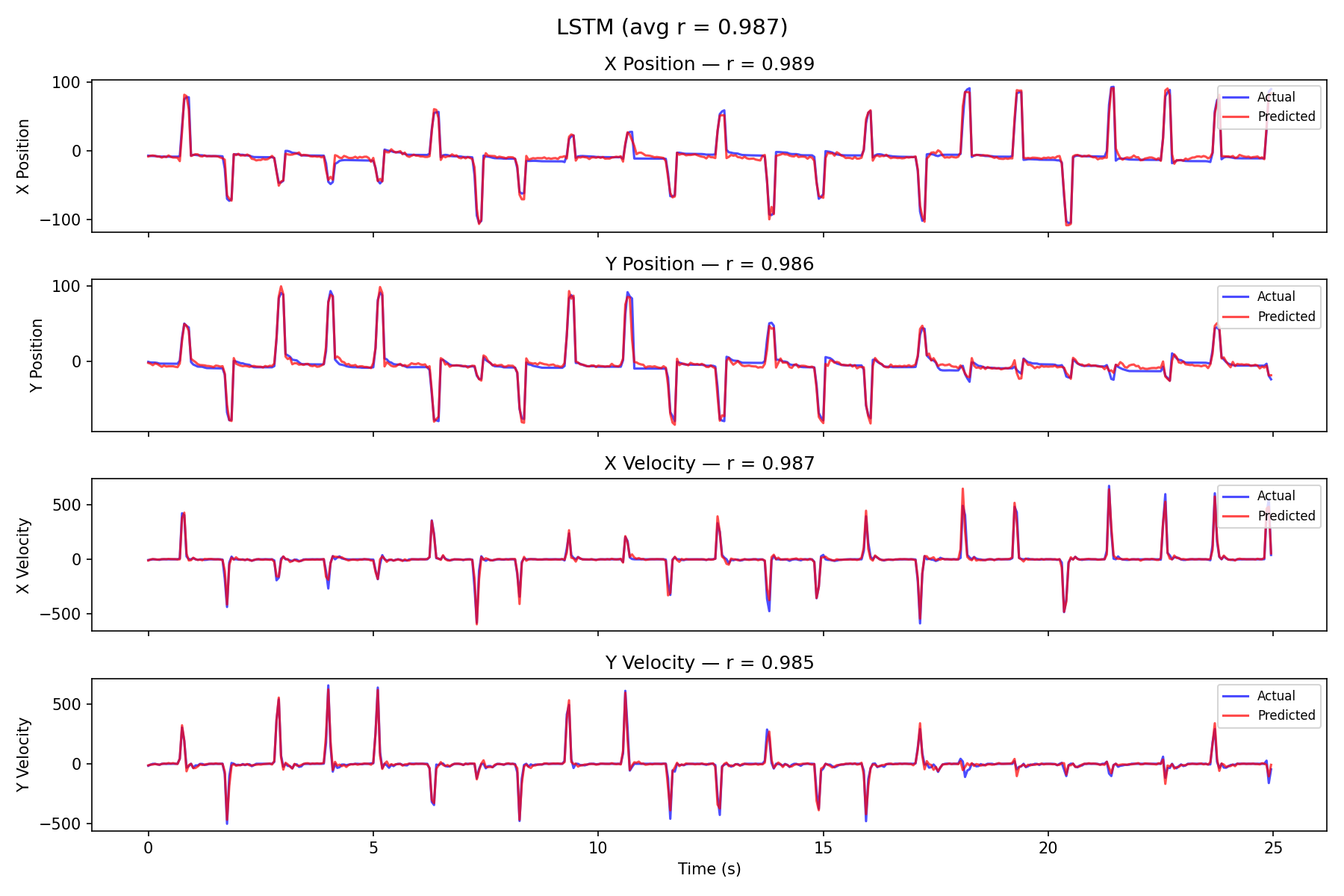

LSTM Decode

The LSTM tracks the actual trajectory almost perfectly, achieving 0.987 average correlation.

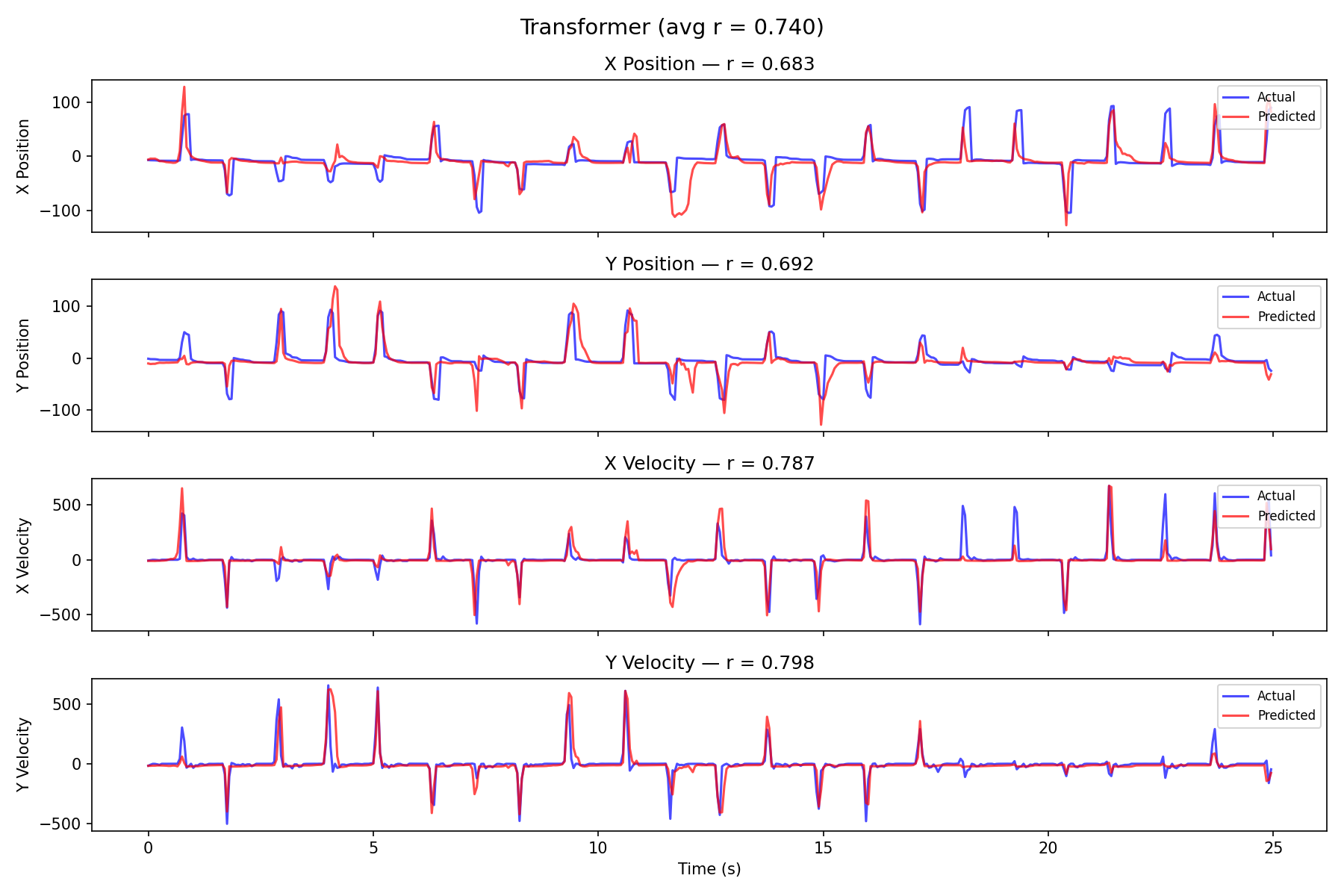

Transformer Decode

The Transformer achieves 0.74 average correlation, likely limited by overfitting on the small dataset.